Designing the AI Curriculum of the Next Five Years

A Human-First Approach

It is time to have a more effective conversation about artificial intelligence in schools. So far, much of the debate has focused on tools, detection, and adaptation: how students use AI, how teachers can recognise AI-generated work, and how institutions can respond. While these are relevant concerns, they do not address the deeper transformation already taking place in the classroom.

The real shift is not technological. It is cognitive.

According to Ron Aboodi, students are not only using AI for greater efficiency but are also starting to rely on it for guidance in situations that call for critical thinking, sometimes without applying enough independent judgment. The answers they produce may appear correct, structured, and even sophisticated. What remains invisible is the process behind them: the questions not asked, the reasoning not developed, the uncertainty not experienced. And yet, it is precisely in that invisible layer that learning happens.

This is where the risk lies.

If we reduce AI in education to a question of use—allowed or not, detected or undetected—we miss the central issue. The presence of AI is not, in itself, a threat. The gradual disengagement from thinking is.

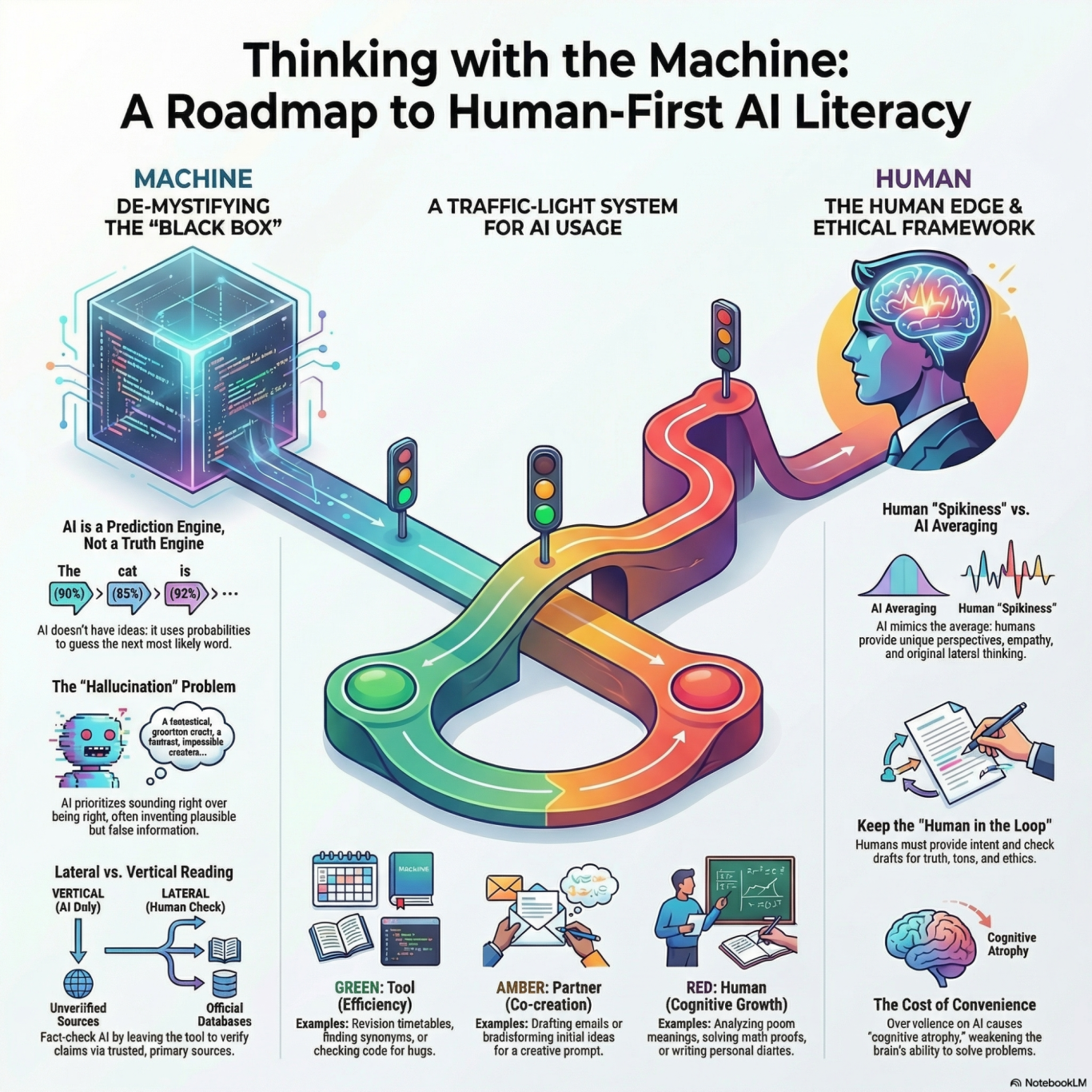

For this reason, I believe we need to rethink what we mean by AI literacy in schools. Teaching students how to use tools is not enough. In fact, it may even be counterproductive if it is not accompanied by a deeper understanding of how these systems work and what their limitations are. AI systems do not think, understand, or know. They generate outputs based on patterns in data. This makes them powerful, but also fundamentally limited. They can produce plausible answers that are incorrect, incomplete, or biased, and without critical engagement, these outputs are easily accepted as reliable.

What we need, therefore, is a generation of responsible thinkers, not a generation of efficient users.

In the framework I have been developing for secondary education, I propose a shift from the idea of the student as a “user” of AI to the student as an “architect” of the interaction. The distinction is not merely semantic. A user asks and receives. An architect questions, guides, evaluates, and remains accountable for the outcome. This shift places the human back at the centre of the process, where it belongs.

Within this perspective, AI becomes a tool that supports thinking, rather than replacing it. Students are encouraged to remain actively involved at every stage of the interaction: to verify outputs, challenge inconsistencies, and bring their own knowledge and perspective to the result. The goal is not to avoid AI, but to ensure that its use does not erode the very skills education is meant to cultivate.

This also requires clarity about what remains distinctly human. AI systems excel at speed, structure, and pattern generation. Humans, however, retain the ability to interpret context, exercise judgment, create meaning, and produce original thought. An effective curriculum must recognise this distinction and actively protect it.

The proposal I am putting forward is therefore not about integrating AI as an additional subject, but about embedding a new layer of awareness across existing practices. Students need to understand not only what AI can do, but also how it influences their thinking, learning, and knowledge production. They need frameworks that help them navigate this interaction consciously, rather than passively.

Over the next five years, schools will inevitably incorporate AI into their systems and practices. Hence, the question is no longer whether this will happen, but how. We have the opportunity to shape this transition in a way that strengthens, rather than weakens, students’ cognitive and ethical development.

This proposal is a contribution in that direction. It is part of an ongoing effort to design an approach to AI literacy that is driven not by fear or enthusiasm alone, but by a careful consideration of what it means to think, to learn, and to remain human in an increasingly automated environment.

If we get this right, AI will not diminish education. It will make it more intentional.

If we get it wrong, the consequences will not be immediately visible—but they will be profound.